The highest-leverage AI Act move for most teams is not a legal deep-dive. It’s knowing what AI you actually run: use cases, data, tools, owners and control level. If you reach 2 August 2026 without an AI inventory, you won’t “become compliant later”. You’ll drown in unknowns.

Primary sources: what applies when?

- EUR‑Lex summary for Regulation (EU) 2024/1689: https://eur-lex.europa.eu/EN/legal-content/summary/rules-for-trustworthy-artificial-intelligence-in-the-eu.html

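- The Regulation applies from 2 August 2026, with exceptions: the general provisions and prohibited practices (Chapters I and II, including AI literacy) from 2 February 2025; the rules on general-purpose AI models, governance and penalties from 2 August 2025; and the high-risk classification rule in Article 6(1) from 2 August 2027.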

- AI Act Service Desk (Art. 113, “Entry into force and application”): https://ai-act-service-desk.ec.europa.eu/en/ai-act/article-113

Not legal advice. This is implementation/process guidance.

In 2026, nobody will ask “Do you use AI?” They will ask “Where exactly, and how do you control it?”

In real mid-market setups:

- AI sits inside SaaS tools, browser extensions, “test accounts”, automations.

- Data flows through email, CRM, tickets and files, sometimes including PII.

- Decisions are “semi-automated”: the AI drafts, and a human clicks send, often too fast to really check.

The risk isn’t the model. The risk is missing visibility, plus missing evidence that you’re in control.

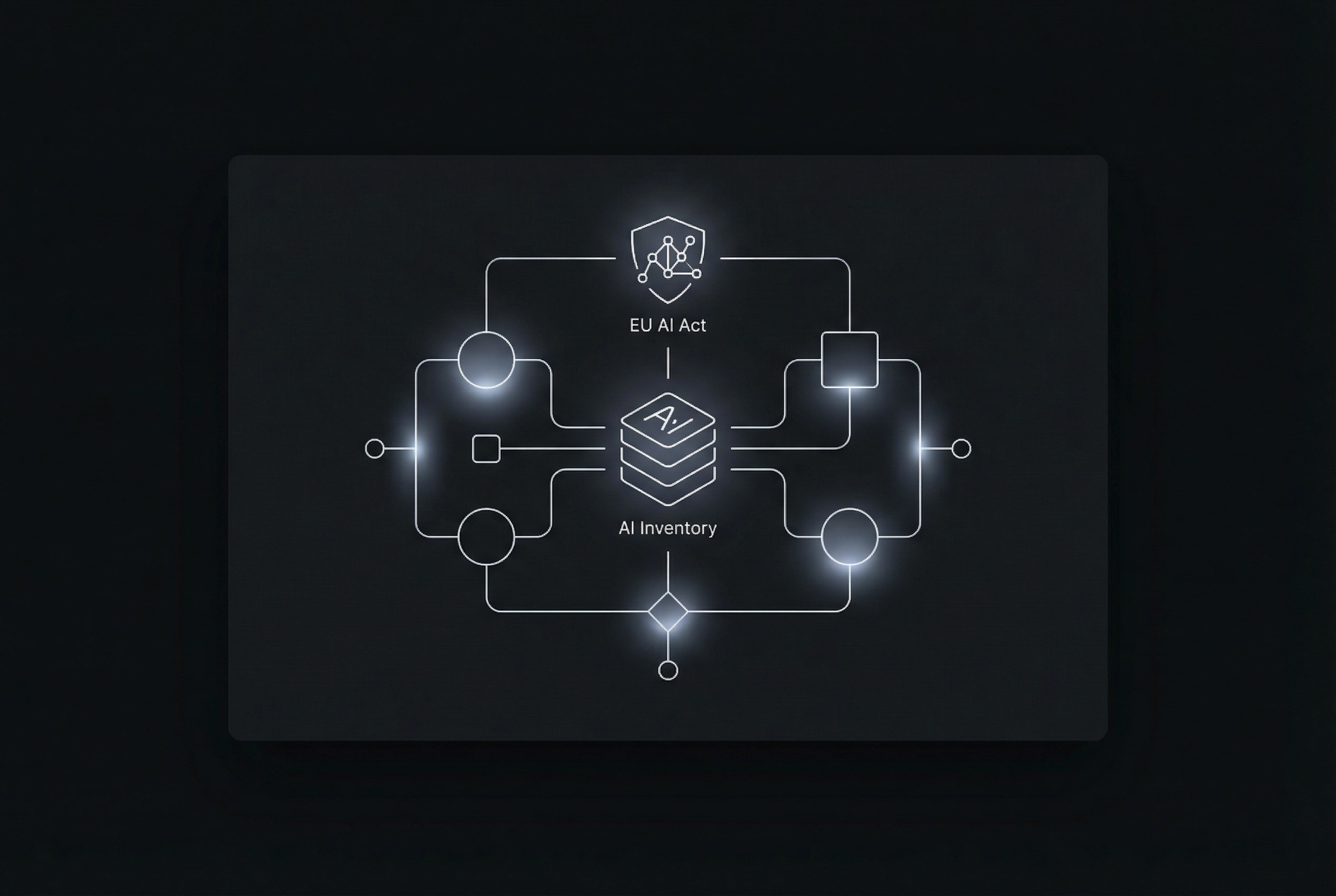

The 80/20 system: an AI inventory as a small internal app

An AI inventory is not a spreadsheet that dies after two weeks. It’s a small internal app with three jobs:

- capture (what AI use cases exist?)

- classify (control level + risk flags)

- prove (evidence + audit trail)

Minimum data model (pragmatic):

- use case name + goal (one sentence)

- owner (business) + reviewers (IT/security/DPO)

- input data types (public/internal/confidential/PII)

- tool/provider + hosting/region (if known)

- output type (internal/external) + impact if wrong

- control level (draft/assist → partial automation → critical)

- logging/retention (what is stored for how long?)

- evidence links/uploads (DPA, DPIA artefacts, approvals)
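As a minimal sketch, the data model above could be expressed as a Python dataclass. The class and field names (`AIUseCase`, `DataType`, `ControlLevel`) and the sample values are hypothetical, chosen for illustration; in a real mini app they would map to a database table or a form definition.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class DataType(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"
    PII = "pii"


class ControlLevel(Enum):
    # Ordered from least to most automation/impact.
    ASSIST = 1              # AI drafts or suggests; a human decides
    PARTIAL_AUTOMATION = 2  # AI runs part of a process step
    CRITICAL = 3            # AI output drives a decision with real impact


@dataclass
class AIUseCase:
    name: str
    goal: str                      # one sentence
    owner: str                     # accountable business owner
    reviewers: list[str]           # e.g. ["IT", "Security", "DPO"]
    data_types: set[DataType]
    tool: str                      # tool/provider; "unknown" is a valid answer
    hosting_region: str | None     # None = not known yet
    external_output: bool          # does output reach customers/partners?
    impact_if_wrong: str
    control_level: ControlLevel
    logging: str                   # what is stored (prompts, outputs, IDs)
    retention_days: int | None     # None = not defined yet
    evidence: list[str] = field(default_factory=list)  # links: DPA, DPIA artefacts, approvals


# Hypothetical example entry.
support_drafts = AIUseCase(
    name="Support reply drafts",
    goal="Draft first replies to support tickets for agents to edit.",
    owner="Head of Support",
    reviewers=["IT", "DPO"],
    data_types={DataType.INTERNAL, DataType.PII},
    tool="unknown",
    hosting_region=None,
    external_output=True,
    impact_if_wrong="A wrong answer reaches a customer",
    control_level=ControlLevel.ASSIST,
    logging="prompts and drafts, linked to ticket ID",
    retention_days=90,
)
```

Allowing `None` for hosting region and retention is deliberate: on day 1 the honest answer is often “unknown”, and an explicit gap is easier to chase than a guessed value.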

What you do NOT need (and what you do)

Not needed (to start):

- perfect legal classification on day 1

- 60-page policies

- a “Center of Excellence” org chart

What you do need:

- one front door (all AI use cases land in one place)

- ownership (who is accountable? who reviews?)

- gates (when can pilots start, when not?)

- evidence (why did you approve this?)
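“Gates” can be as simple as a function that lists what still blocks a pilot. A minimal sketch, assuming hypothetical rules: IT/security sign-off is always required, DPO sign-off whenever PII is involved, external-facing output starts in assist mode, and nothing at the critical level goes straight to a pilot. `GateInput` and `pilot_blockers` are illustrative names; your own gate rules will differ and should follow the review roles you defined.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class GateInput:
    """Hypothetical subset of inventory fields that a pilot gate looks at."""
    owner: str | None
    approvals: set[str] = field(default_factory=set)   # roles that signed off, e.g. {"IT", "DPO"}
    uses_pii: bool = False
    external_output: bool = False
    control_level: str = "assist"                      # "assist" | "partial_automation" | "critical"
    evidence: list[str] = field(default_factory=list)  # links to approvals, DPA, DPIA artefacts


def pilot_blockers(uc: GateInput) -> list[str]:
    """Return the reasons a pilot may not start yet; an empty list means the gate is open."""
    blockers: list[str] = []
    if not uc.owner:
        blockers.append("no accountable owner")
    if "IT" not in uc.approvals:
        blockers.append("missing IT/security approval")
    if uc.uses_pii and "DPO" not in uc.approvals:
        blockers.append("PII in scope but no DPO approval")
    if uc.external_output and uc.control_level != "assist":
        blockers.append("external output must start in assist mode")
    if uc.control_level == "critical":
        blockers.append("critical control level needs a full review, not a pilot")
    if not uc.evidence:
        blockers.append("no evidence attached (why was this approved?)")
    return blockers


print(pilot_blockers(GateInput(owner=None, uses_pii=True)))
```

Returning reasons instead of a plain yes/no matters: the list of blockers is exactly what the requester needs to fix, and it doubles as a record of why a pilot was held back.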

Checklist 1: AI inventory fields (copy/paste)

- Use case name + goal (one sentence)

- Owner (business) + backup

- User group (who uses it?)

- Tool/provider/model (if known)

- Input sources (email/CRM/files/DB/API)

- Data types: public / internal / confidential / PII

- Output: internal vs external (customer/partner)

- Human-in-the-loop (where must a human approve?)

- Automation level (assist / partial process / decision)

- Logging: what’s logged? (inputs/outputs/prompts/IDs)

- Retention: how long? who can access?

- Risk flags (e.g., people impact, PII, external)

- Evidence: links/files (DPA, DPIA, approvals)
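The risk-flag field does not have to be filled in by hand. A minimal triage sketch, assuming hypothetical rules: any flag means the use case starts at the assist level (a human approves every output), and anything that affects people (for example, hiring or performance decisions) is escalated to a full review. The function name and flag strings are illustrative, and this is a routing aid, not a legal classification under the AI Act.

```python
from __future__ import annotations


def triage(data_types: set[str], external_output: bool, affects_people: bool) -> dict:
    """Derive risk flags and a suggested starting control level from checklist answers.

    Hypothetical rules for illustration only; not a legal classification.
    """
    flags: set[str] = set()
    if "pii" in data_types:
        flags.add("pii")
    if "confidential" in data_types:
        flags.add("confidential")
    if external_output:
        flags.add("external")        # output reaches customers or partners
    if affects_people:
        flags.add("people_impact")   # e.g. hiring, performance reviews, access to services

    return {
        "risk_flags": flags,
        # Any flag: start with a human approving every output.
        "start_level": "assist" if flags else "partial_automation",
        # People impact always goes to a full IT/security/DPO review before a pilot.
        "full_review": "people_impact" in flags,
    }


print(triage({"internal", "pii"}, external_output=True, affects_people=False))
```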

Checklist 2: “AI Act ready” in 14 days (realistic)

- Days 1–2: collect 15–30 use cases (workshop, plus an explicit call for shadow AI)

- Days 3–4: lock the data model + roles (owner/reviewer)

- Days 5–7: build the review flow (submitted → review → pilot → approved/rejected)

- Days 8–9: evidence vault + audit trail (who approved what when)

- Days 10–11: triage wizard (risk flags + default control levels)

- Days 12–14: pilot playbook (do/don’t, data rules, logging) + team briefing
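The review flow from days 5–7 and the audit trail from days 8–9 fit into one small state machine. A minimal in-memory sketch: the state names follow the flow above, while the class names and the extra review-to-rejected shortcut are assumptions. In a real app the trail would live in a database table that only allows inserts, so nobody can quietly edit who approved what and when.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone

# Allowed transitions: submitted -> review -> pilot -> approved/rejected.
# Rejecting directly from "review" is an assumption added for illustration.
TRANSITIONS: dict[str, set[str]] = {
    "submitted": {"review"},
    "review": {"pilot", "rejected"},
    "pilot": {"approved", "rejected"},
    "approved": set(),
    "rejected": set(),
}


@dataclass(frozen=True)
class AuditEvent:
    """One immutable audit-trail entry: who moved which use case where, and when."""
    use_case: str
    from_state: str
    to_state: str
    actor: str
    comment: str
    at: datetime


@dataclass
class ReviewFlow:
    use_case: str
    state: str = "submitted"
    trail: list[AuditEvent] = field(default_factory=list)  # only ever appended to

    def move(self, to_state: str, actor: str, comment: str = "") -> None:
        """Apply an allowed transition and record it; raise ValueError otherwise."""
        if to_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.state} -> {to_state} is not allowed")
        self.trail.append(AuditEvent(self.use_case, self.state, to_state,
                                     actor, comment, datetime.now(timezone.utc)))
        self.state = to_state


# Hypothetical usage.
flow = ReviewFlow("Support reply drafts")
flow.move("review", actor="it.security", comment="Tool and hosting region checked")
flow.move("pilot", actor="dpo", comment="Pilot limited to one support team")
for event in flow.trail:
    print(event.at.isoformat(), event.actor, event.from_state, "->", event.to_state)
```

Because every state change goes through `move`, the audit trail cannot drift out of sync with the current status: there is no way to reach “pilot” without a recorded actor and timestamp.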

How we help: we build the AI inventory mini app (no overengineering)

Send us:

- your top 10 processes where AI is “somehow” used

- the tools currently in the wild (including unofficial ones)

- who performs IT/security/DPO reviews

We deliver in 7–14 days:

- an internal AI inventory mini app

- a review flow + audit trail

- templates for typical mid-market use cases (email assist, support drafts, document workflows)